Find mass deletions to free up Gmail space

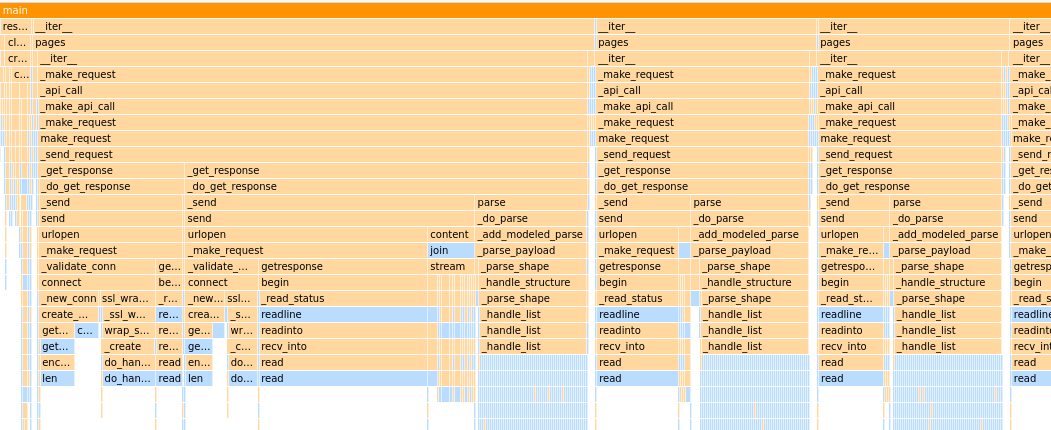

Skip to the final script for a way to find the largest groups of emails in your inbox. I freed up about 10% of my storage just by deleting old notification and newsletter emails.

Cleaning up my Inbox

My Gmail inbox is getting full, and Google often lets me know:

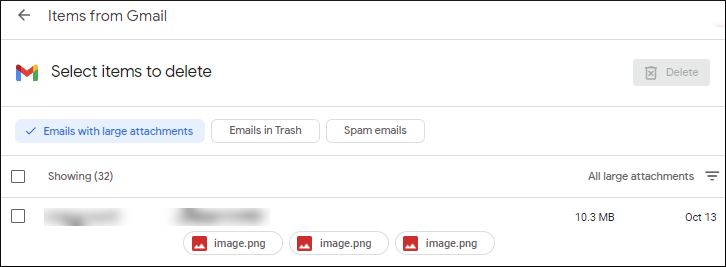

Google offers some help cleaning up the cruft, but it's not really in their interest to make the tools too useful.

Their list lets you delete emails one by one and is similar to searching larger:10M in Gmail. You'll eventually free up space that way, one email at a time, but can we do this faster?

What about all those newsletters, notifications from LinkedIn, messages from Facebook, and so on, that are small individually but together take up megabytes of space?
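To make the idea concrete, here is a minimal Python sketch of grouping messages by sender and summing their sizes. It is not the final script from this post: the function name `total_size_by_sender`, the `(sender, size_in_bytes)` input shape, and the sample addresses and sizes are all made up for illustration, and it assumes you have already pulled that data out of your mailbox somehow.

```python
from collections import defaultdict


def total_size_by_sender(messages):
    """Return (sender, message_count, total_bytes) rows, largest total first.

    `messages` is an iterable of (sender, size_in_bytes) pairs.
    This input shape is an assumption made for this sketch.
    """
    totals = defaultdict(lambda: [0, 0])  # sender -> [count, total bytes]
    for sender, size_bytes in messages:
        entry = totals[sender]
        entry[0] += 1
        entry[1] += size_bytes
    rows = [(sender, count, size) for sender, (count, size) in totals.items()]
    # Sort by total bytes so the biggest groups come first.
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Made-up sample data: no single message is large, but the groups add up.
sample = [
    ("notifications@linkedin.example", 40_000),
    ("newsletter@shop.example", 75_000),
    ("notifications@linkedin.example", 40_000),
    ("friend@mail.example", 100_000),
    ("newsletter@shop.example", 75_000),
    ("notifications@linkedin.example", 40_000),
]

for sender, count, size in total_size_by_sender(sample):
    print(f"{sender}: {count} messages, {size / 1000:.0f} kB")
```

In this sample the largest single message comes from `friend@mail.example`, yet both bulk senders rank above it once their messages are added together. A size-only search like larger:10M misses exactly that kind of group.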